Discovery of Hidden Miscalibration Regimes

Kasia Kobalczyk, Mihaela van der Schaar

May 2026

Abstract

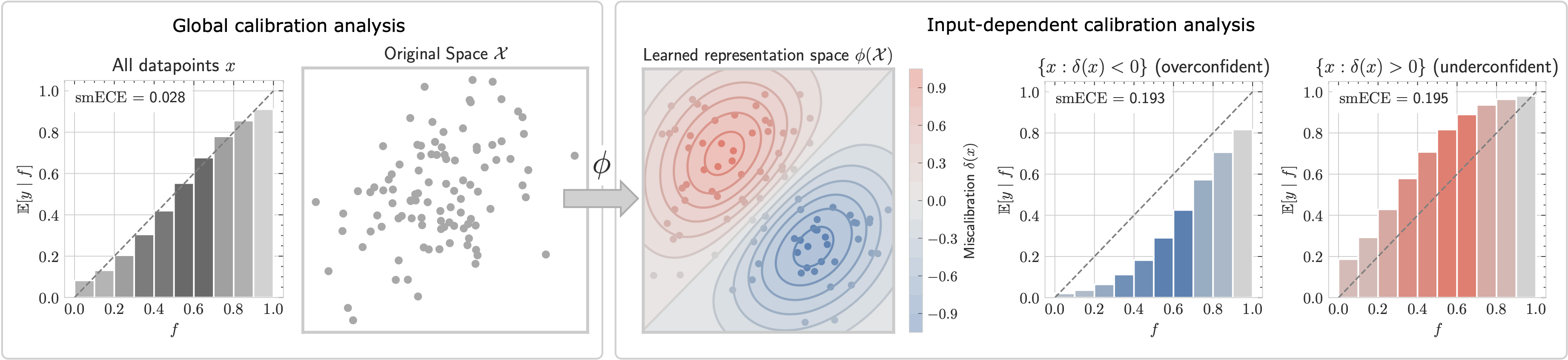

Calibration is commonly evaluated by comparing a model's confidence with its empirical correctness, which implicitly treats reliability as a function of the confidence score alone. However, this view can hide substantial structure: a model may be systematically overconfident on some kinds of inputs and underconfident on others, so that global reliability diagnostics obscure localised calibration failures. To address this, we formulate the problem of discovering hidden miscalibration regimes without assuming access to predefined data slices. We define the corresponding miscalibration field and propose a diagnostic framework for estimating it. Our approach learns a calibration-aware representation of the input space and estimates signed local miscalibration by kernel smoothing in the learned geometry. Across four real-world LLM benchmarks and twelve LLMs, we find that input-dependent calibration heterogeneity is prevalent. We further show that the discovered fields are actionable: they support local confidence correction and reduce calibration error in systematically miscalibrated regions where confidence-based methods such as isotonic regression and temperature scaling are less effective.

- model calibration

- uncertainty quantification

- probabilistic machine learning

- LLMs
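The kernel-smoothing step described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes the representation `z` is already given (here a synthetic one-dimensional embedding rather than a learned one), uses a Gaussian kernel with a hand-picked bandwidth, and takes the signed local miscalibration to be the kernel-weighted average of confidence minus correctness. The function name `signed_miscalibration_field` and all parameters are hypothetical.

```python
import numpy as np

def signed_miscalibration_field(z_query, z_train, conf, correct, bandwidth=0.2):
    """Nadaraya-Watson estimate of E[confidence - correctness | z].

    Positive values suggest local overconfidence, negative values local
    underconfidence. The Gaussian kernel and bandwidth are assumptions.
    """
    z_query = np.atleast_2d(z_query)
    residual = conf - correct  # signed per-example calibration residual
    # Pairwise squared distances, shape (n_query, n_train).
    d2 = ((z_query[:, None, :] - z_train[None, :, :]) ** 2).sum(axis=-1)
    logits = -d2 / (2.0 * bandwidth ** 2)
    # Subtract the row maximum before exponentiating for numerical stability.
    logits -= logits.max(axis=1, keepdims=True)
    weights = np.exp(logits)
    return weights @ residual / weights.sum(axis=1)

# Synthetic data (assumed for illustration): the model always reports 0.75
# confidence, but its accuracy is 0.6 where z < 0 and 0.9 where z >= 0.
rng = np.random.default_rng(0)
n = 2000
z = rng.uniform(-1.0, 1.0, size=(n, 1))
conf = np.full(n, 0.75)
p_correct = np.where(z[:, 0] < 0, 0.6, 0.9)
correct = (rng.uniform(size=n) < p_correct).astype(float)

# A confidence-only diagnostic sees one confidence level whose average
# accuracy roughly matches it, so the global gap is close to zero.
global_gap = conf.mean() - correct.mean()

# The field evaluated inside each regime should recover the hidden
# structure: positive on the left, negative on the right.
field = signed_miscalibration_field(np.array([[-0.5], [0.5]]), z, conf, correct)
print("global gap:", round(float(global_gap), 3))
print("local field at z=-0.5 and z=0.5:", np.round(field, 3))
```

In this toy setting every example has the same confidence, so a method that remaps confidence alone cannot separate the two regimes, whereas subtracting the estimated field from the confidence at each input gives a simple form of local correction. The choice of bandwidth trades off variance against blurring of regime boundaries and would need tuning in practice.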